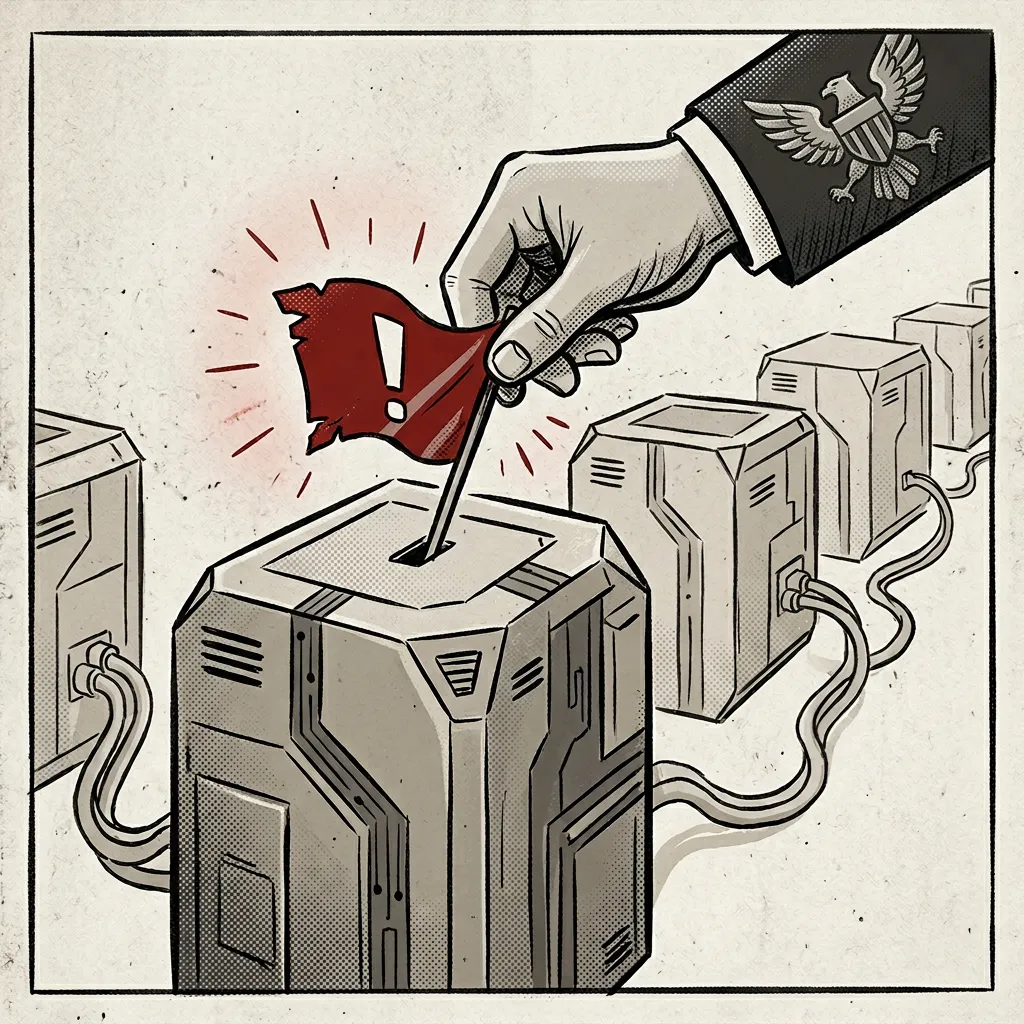

According to reports, the White House is developing guidance that would allow government agencies to bypass safety restrictions, known as "risk flags," placed on new artificial intelligence models by the company Anthropic. This reported move follows a prior disagreement between Anthropic and the U.S. Department of Defense earlier this year.

The Indian publication The Hindu provides specific context for the reported guidance, linking it directly to a previous dispute. The outlet reports that Anthropic faced a "fallout" with the Pentagon after the company declined to remove its AI's built-in safeguards. These safeguards, or "guardrails," are designed to prevent the technology's use for developing autonomous weaponry or for domestic surveillance operations. This framing implies that the new White House guidance is a response aimed at overcoming such corporate-imposed limitations on military and security applications.

In contrast, Singapore's Channel News Asia (CNA) presents the development in a more general business and policy context and does not detail the history with the Pentagon. Its brief report, citing Axios, states that the guidance is being drafted but does not elaborate on the motivations behind it or on the specific nature of the safety flags involved. This framing presents the story primarily as a regulatory development in the AI sector.

The core factual claim, that guidance is being drafted to bypass Anthropic's safety protocols, is consistent across both sources. However, The Hindu explicitly connects this action to a concrete prior event (the Pentagon dispute) and specifies the prohibited uses (autonomous weapons, domestic surveillance), which yields a narrative of governmental pushback against corporate ethics policies. CNA's report does not contradict this account but offers a more neutral, standalone policy update.

Neither source comments on the validity or wisdom of the reported guidance; both confine themselves to reporting. The divergence lies in the depth of contextual framing and in the implied narrative about the U.S. government's relationship with AI ethics safeguards.