White House Seeks Workaround for AI Safety Protocols

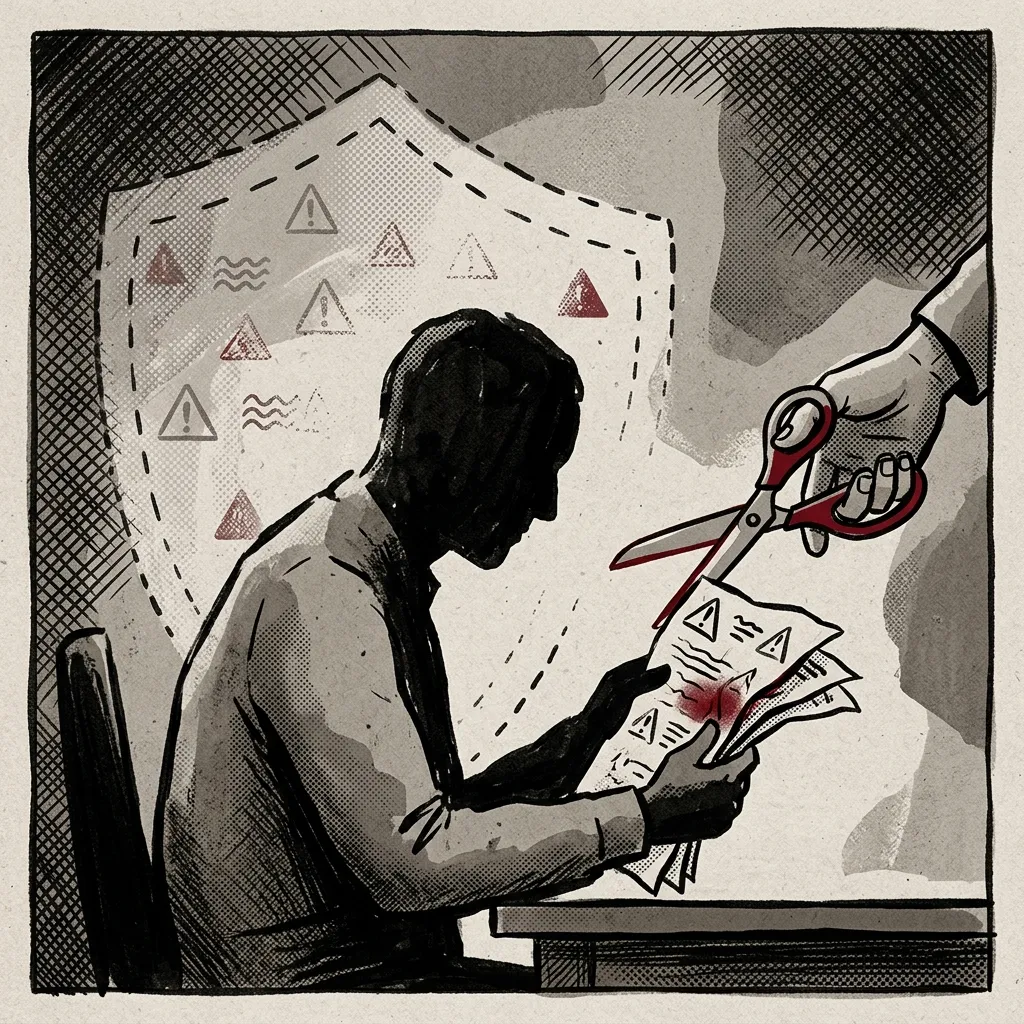

The White House is reportedly drafting guidance that would allow government agencies to bypass safety warnings issued by artificial intelligence company Anthropic for its AI models, according to reports from multiple outlets citing Axios.

The development highlights a tension between the federal government's operational needs and the safety frameworks established by one of the leading AI companies. Anthropic, known for its emphasis on AI safety research, implements risk flags designed to prevent its models from being used in potentially harmful applications.

Context of Pentagon Tensions

According to The Hindu, this guidance effort follows earlier friction between Anthropic and the U.S. Department of Defense. The Indian publication reports that Anthropic "faced a fallout with the Pentagon earlier in the year" after the startup declined to remove protective measures that prevent its AI systems from being deployed for autonomous weapons development or domestic surveillance operations.

The Hindu frames these guardrails as deliberate safety features that Anthropic refused to compromise on, positioning the company as holding to its ethical stance despite pressure from a major government client. The publication's choice of the word "fallout" suggests significant disagreement between the parties.

Limited Details on Guidance Scope

Channel NewsAsia's coverage also reports the White House guidance initiative but provides minimal detail about its scope, implementation timeline, or the specific safety flags targeted. The Singapore-based outlet attributes the information to Axios without elaborating on the technical or policy implications.

Neither source specifies which particular Anthropic safety protocols the guidance would affect, whether the bypass mechanism would apply to all government agencies or specific departments, or what oversight mechanisms might govern such exceptions. The reports also do not indicate whether Anthropic has been consulted in developing this guidance or what the company's response has been.

Broader Implications for AI Governance

The reported guidance raises questions about the balance between national security interests and the AI safety protocols established by private companies. Anthropic has positioned itself as a leader in developing "constitutional AI" systems with built-in safety constraints, which makes the reported circumvention particularly notable.

The guidance comes amid growing government interest in AI capabilities across defense, intelligence, and administrative functions. However, the specific applications for which the White House envisions bypassing Anthropic's safety flags remain unclear from available reporting.

The Hindu's mention of autonomous weapons and domestic surveillance as specific areas where Anthropic maintained its guardrails provides context for the types of applications that might be at stake, though the current guidance may address different or broader use cases.

Unanswered Questions

Key details remain unreported across both sources: the legal authority under which such guidance would operate, whether other AI companies face similar pressure, how Anthropic's commercial relationship with government agencies might be affected, and what technical mechanisms would enable the bypass of safety flags.

The story highlights an emerging governance challenge: governments seek to leverage advanced AI capabilities, while companies that implement safety measures face pressure to accommodate national security priorities. How this tension is resolved could set precedents for AI deployment in sensitive government applications globally.