Seven separate lawsuits have been filed in a California court against the artificial intelligence company OpenAI and its chief executive, Sam Altman. The legal actions were brought by relatives of those killed in a mass shooting at a school in Canada, an attack one source described as among the deadliest of its kind in the country.

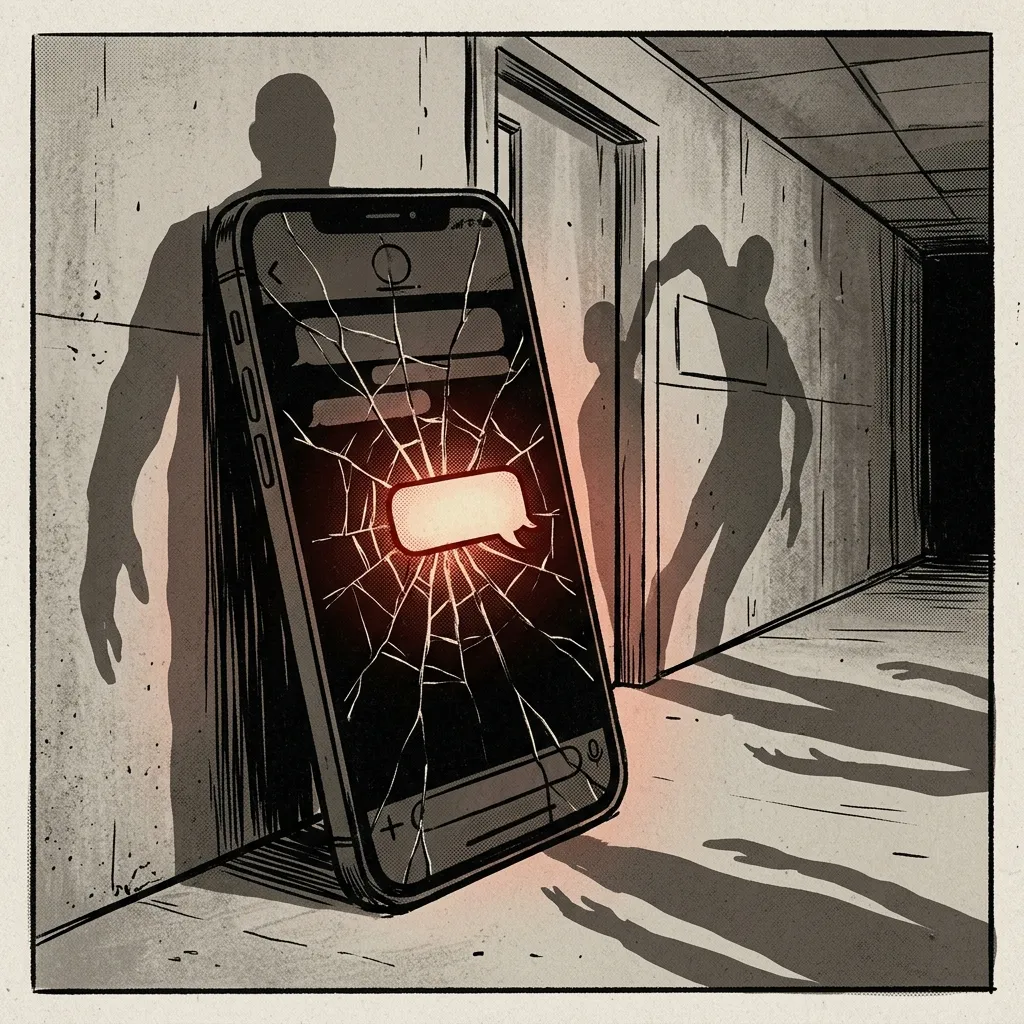

The core allegation, as presented in the court documents, is that the company acted negligently. The plaintiffs argue that OpenAI failed to properly monitor or flag concerning activity by the suspected shooter on its ChatGPT platform. The lawsuits claim that, by not identifying this activity and reporting it to law enforcement, the company effectively abetted the planning and execution of the attack. The complaints further contend that this omission forestalled an intervention that might have averted the tragedy.

The cases highlight an emerging legal and ethical frontier concerning the responsibilities of AI developers. The lawsuits position OpenAI not merely as a technology provider but as an entity with a duty to proactively detect and report threats planned with the help of its tools. This framing extends the company's role beyond platform neutrality into active content moderation and public safety enforcement.

The available reports focus on the plaintiffs' perspective and on the novel nature of the legal claim, which centers on a purported duty to alert police. The cases are presented as a direct challenge to standard industry practice, under which user interactions with generative AI are typically treated as private and are not actively monitored for criminal intent. The outcome could set a significant precedent for liability standards across the technology sector.